Finding the best GPU cloud providers for AI in 2026 is no longer about comparing simple feature lists it is an infrastructure decision framework written for engineers who understand that the difference between a high-performing cluster and a costly mistake is measured in dollars per TFLOP, gradient synchronization latency, and model FLOP utilization, not dashboard aesthetics or support chat response time.

The GPU cloud market shifted structurally in 2025 and 2026. NVIDIA’s Blackwell architecture (B200, GB200) has begun displacing Hopper (H100) as the reference tier for frontier model training.

Specialized AI clouds have taken meaningful market share from hyperscalers on raw compute workloads. Consequently, the cost gap between AWS/GCP/Azure and purpose-built GPU infrastructure has widened to a point where it is no longer defensible for compute-only workloads.

This guide to the best GPU cloud providers for AI starts where the hardware starts, GPU architecture, and builds outward to provider selection, interconnect topology, and real cost modeling.

Table of Contents

GPU Architecture First Choosing the Right Silicon Before Choosing a Cloud

Before evaluating any cloud provider, establish which GPU generation your workload requires.

For frontier model training above 70B parameters in 2026, Blackwell B200/GB200 NVL72 is the reference architecture.

For production training at the 7B–70B range, H100 SXM5 remains the cost-performance optimum.

For inference, H100 PCIe and L40S offer better economics than B200 at current market pricing.

Choosing a GPU cloud without first defining GPU architecture requirements is equivalent to specifying a database before defining your query patterns.

The hardware constrains everything downstream: provider availability, interconnect topology, memory capacity, and ultimately cost per useful FLOP.

H100 SXM5 vs. B200 vs. GB200 NVL72: Architecture Comparison

| Specification | H100 SXM5 | B200 SXM | GB200 NVL72 |

| Architecture | Hopper | Blackwell | Blackwell (Grace-Hopper) |

| VRAM | 80GB HBM3 | 192GB HBM3e | 192GB HBM3e per GPU |

| Memory Bandwidth | 3.35 TB/s | 8.0 TB/s | 8.0 TB/s |

| BF16 TFLOPS | 989 | ~4,500 (with sparsity) | ~4,500 |

| FP8 TFLOPS | 1,979 | ~9,000 | ~9,000 |

| NVLink Bandwidth | 900 GB/s | 1,800 GB/s | 1,800 GB/s |

| TDP | ~700W | ~1,000W | ~1,200W (GB200) |

| Availability (2026) | Broad | Limited-growing | Very limited |

| Est. Cloud Cost | $2.50–4.25/hr | $8–14/hr (est.) | Cluster contracts only |

Engineering interpretation: The B200’s 2.4x memory bandwidth improvement over H100 SXM5 is more significant than the raw TFLOPS headline.

Memory bandwidth is the binding constraint for attention computation in transformer inference, not arithmetic throughput.

A B200 serving a 70B parameter model at INT8 precision will achieve substantially higher token throughput than an H100 at identical batch sizes but at 3–4x the hourly cost.

The crossover point where B200 inference is cheaper per token than H100 occurs at high concurrency (1,000+ simultaneous requests).

Below that threshold, H100 SXM5 clusters remain the cost-optimal inference choice.

GB200 NVL72: What It Is and When It Matters

The GB200 NVL72 is not a GPU in the traditional sense.

It is a 72-GPU rack-scale system with a shared NVLink fabric delivering 130 TB/s of aggregate bandwidth across the entire rack.

It is designed for single models that cannot fit in any smaller configuration, think 400B+ parameter dense training or trillion-parameter mixture-of-experts architectures.

In 2026, GB200 NVL72 access is available primarily through hyperscalers (AWS, GCP, Azure, and Oracle) and NVIDIA DGX Cloud on multi-year cluster contracts.

If your workload requires it, the provider decision is made by availability, not preference.

The MFU Benchmark: Why Theoretical TFLOPS Are Misleading

Model FLOP Utilization (MFU) measures the fraction of theoretical peak compute that a system actually delivers on a real training workload.

It is the single most important performance metric for GPU cloud evaluation and the one most consistently absent from provider marketing materials.

| Infrastructure Configuration | Typical MFU Range | Primary Bottleneck |

| H100 SXM5, InfiniBand HDR, 8-GPU node | 48–58% | Memory bandwidth at large batch sizes |

| H100 SXM5, NVLink only, no InfiniBand | 38–48% | Inter-node gradient sync over Ethernet |

| H100 PCIe, Ethernet interconnect | 28–38% | PCIe bandwidth + Ethernet latency |

| A100 SXM4 80GB, InfiniBand | 42–52% | Lower peak TFLOPS vs H100 |

| B200, InfiniBand NDR, 8-GPU node | 52–62% (est.) | Early driver maturity in 2026 |

| GB200 NVL72, NVLink fabric | 58–68% (est.) | Model parallelism coordination overhead |

What this means in practice: An H100 SXM5 cluster with InfiniBand HDR running at 52% MFU delivers more useful compute per dollar than an H100 PCIe cluster at 32% MFU even if the PCIe instance has a lower sticker price per GPU-hour.

Always request or estimate MFU for your specific model architecture before committing to a provider.

Interconnect Topology The Infrastructure Layer Nobody Talks About Enough

For multi-GPU training above 13B parameters, interconnect architecture is as important as GPU specification.

InfiniBand HDR/NDR provides 200–400 Gb/s per link with microsecond latency, enabling near-linear scaling to 512+ GPUs.

Standard Ethernet introduces 10–40x higher latency during all-reduce operations.

NVLink handles intra-node communication InfiniBand handles inter-node.

Both are required for large-scale distributed training.

The dominant source of performance loss in multi-GPU training is not compute throughput it is communication overhead during gradient synchronization.

Understanding the interconnect hierarchy is essential to predicting real-world training performance.

The Three-Layer Interconnect Stack

- Intra-GPU (NVLink/NVSwitch) NVLink connects GPUs within a single node.

H100 SXM5 nodes use NVSwitch to create a fully non-blocking 900 GB/s all-to-all fabric across 8 GPUs. This makes intra-node all-reduce operations extremely fast, effectively negligible for most architectures. B200 doubles this to 1,800 GB/s.

PCIe-connected GPUs bypass NVSwitch entirely and are limited to PCIe Gen5 x16 bandwidth (~64 GB/s bidirectional), introducing a severe bottleneck for data-parallel training.

- Inter-Node (InfiniBand or Ethernet) When training spans multiple nodes, gradients must traverse the inter-node fabric.

InfiniBand HDR delivers 200 Gb/s per port with 1–2 microsecond latency. InfiniBand NDR doubles this to 400 Gb/s.

Standard 100GbE Ethernet delivers similar raw bandwidth but with 10–100x higher latency and software overhead that compounds at scale.

For NCCL all-reduce operations across 64+ GPUs, InfiniBand vs Ethernet is not a marginal performance difference it is the difference between viable and unviable training configurations.

- Storage I/O Frequently overlooked checkpoint save/load and dataset prefetch I/O can create training stalls that appear to be compute bottlenecks.

NVMe local SSDs (3–7 GB/s) handle checkpoint writes adequately for most workloads.

Parallel distributed file systems (GPFS, Lustre, and WekaFS) become essential when dataset sizes exceed local NVMe capacity or when checkpoint frequency is high.

Interconnect Availability by Provider

| Provider | Intra-Node | Inter-Node | Max Tested Scale | Storage Option |

| CoreWeave | NVLink (SXM5) | InfiniBand HDR | 512+ GPUs | WekaFS / local NVMe |

| AWS (p5.48xlarge) | NVLink (H100) | EFA 3200 Gbps | 512+ GPUs | FSx for Lustre |

| GCP (A3 Mega) | NVLink (H100) | RDMA (custom) | 256+ GPUs | Filestore / GCS |

| Azure (ND H100 v5) | NVLink (H100) | InfiniBand HDR | 512+ GPUs | Azure NetApp Files |

| Lambda Cloud | NVLink (SXM5) | InfiniBand HDR | 64 GPUs (clusters) | Local NVMe |

| RunPod | NVLink (SXM5) | Ethernet (10–100GbE) | 8 GPUs (single node) | Local NVMe |

| Vast.ai | Varies by host | Varies by host | 8 GPUs typical | Varies |

| Oracle OCI | NVLink (H100) | RDMA (RoCE v2) | 256+ GPUs | DAFS / Block |

| GMI Cloud | NVLink (SXM5) | InfiniBand HDR | 64+ GPUs | Local NVMe |

Engineering implication: RunPod’s lack of InfiniBand inter-node connectivity is not a deficiency for single-node 8-GPU workloads it is irrelevant.

But for distributed training across multiple nodes, RunPod is architecturally unsuitable.

The selection criterion is not which provider has InfiniBand, but whether your workload requires it.

Models under 30B parameters can often be trained on a single 8-GPU H100 SXM5 node without any inter-node communication at all.

Provider Engineering Analysis 2026 Market Landscape

In 2026, the GPU cloud market segments into three tiers.

Tier 1 (hyperscalers: AWS, GCP, and Azure) offers managed infrastructure, compliance, and Blackwell access at premium pricing.

Tier 2 (specialized AI clouds: CoreWeave, Lambda, and GMI) offers H100 and emerging B200 capacity at 3–5x lower compute cost with strong InfiniBand networking.

Tier 3 (marketplaces: RunPod and Vast.ai) offers the lowest cost floor with reduced reliability and no compliance guarantees.

Workload requirements, not preference, should determine which tier you operate in.

Tier 1: Hyperscaler Infrastructure

AWS p5.48xlarge (H100) and UltraCluster

AWS’s p5.48xlarge instance delivers 8x H100 SXM5 GPUs with 3,200 Gbps EFA (Elastic Fabric Adapter) networking — AWS’s proprietary RDMA fabric.

EFA performance is competitive with InfiniBand HDR on most NCCL workloads, though latency characteristics differ.

The p5 instances are the first AWS GPU configuration with genuine large-scale LLM training viability. UltraClusters extend this to thousands of GPUs with dedicated EFA fabric.

The SageMaker layer adds managed experiment tracking, distributed training orchestration, hyperparameter tuning, and model deployment. For organizations without dedicated MLOps engineering capacity, the value is real.

For organizations with strong infrastructure teams, SageMaker adds cost without proportional capability gain.

→ Egress pricing ($0.09/GB) remains the most significant hidden cost for checkpoint-heavy training pipelines. A 70B model checkpoint at BF16 precision runs ~140GB.

At 10 checkpoints per training run and 20 runs per month, egress alone adds $2,500/month before compute.

→ B200/GB200 access: AWS has announced GB200 NVL72 availability via UltraCluster. Access in 2026 is through reserved capacity agreements, not on-demand.

GCP — A3 Mega (H100) and A3 Ultra (B200)

GCP’s A3 Ultra instances, shipping in 2026, bring B200 GPU access with Google’s custom RDMA fabric. The A3 Mega (H100) configuration uses Google’s proprietary 1,600 Gbps per-GPU RDMA interconnect — technically distinct from InfiniBand but delivering comparable all-reduce performance on benchmark workloads.

TPU v5p access alongside GPU instances enables a genuinely unique hybrid architecture for teams running JAX-based training at scale. Vertex AI Pipelines and Colab Enterprise provide strong managed ML tooling.

→ GCP’s preemptible GPU instances offer 60–80% discounts but are interrupted with 30 seconds notice — viable for fault-tolerant training with checkpoint-resume logic, not viable for long contiguous training runs without it.

Azure — ND H100 v5 and NDm A100 v4

Azure’s compliance posture remains the strongest in the hyperscaler tier: FedRAMP High, DoD IL4/IL5, HIPAA, ISO 27001, and a broad set of industry-specific certifications.

For government, defense, and healthcare AI workloads, Azure is often the only viable choice regardless of cost.

The ND H100 v5 series delivers 8x H100 SXM5 per node with InfiniBand HDR networking. Azure’s B200 availability timeline in 2026 is behind AWS and GCP.

→ Azure Confidential Computing GPU instances (preview in 2026) enable encrypted GPU memory for sensitive inference workloads — a unique capability with no direct equivalent at other providers.

Tier 1 Hyperscaler Comparison: 2026 Engineering Scorecard

| Provider | Best For | Core Pros | Core Cons |

| AWS (P5) | Enterprise scaling & ecosystem | Massive viability for large-scale training via UltraClusters complete SageMaker MLOps suite 3,200 Gbps EFA networking. | Highest hidden costs via egress fees ($0.09/GB) B200 access requires reserved contracts. |

| GCP (A3) | Hybrid JAX/TPU & cost-efficiency | Unique GPU/TPU v5p side-by-side training; massive 60–80% discounts on preemptible instances custom 1,600 Gbps RDMA. | Preemptible GPUs only give 30-second notice before termination uses a proprietary interconnect fabric. |

| Azure (ND) | Regulated industries & government | Strongest compliance (FedRAMP High, DoD IL5, HIPAA) standard InfiniBand HDR networking encrypted GPU memory. | B200 availability timeline currently lags behind competitors generally the most expensive tier for raw compute. |

Tier 2: Specialized AI Clouds

CoreWeave The Engineering-First Provider

CoreWeave was built by GPU infrastructure engineers for GPU infrastructure workloads, and the architecture reflects it.

Their InfiniBand HDR fabric, WekaFS parallel storage integration, and Kubernetes-native orchestration layer produce a training environment that consistently achieves MFU in the 50–58% range on H100 SXM5 clusters among the highest documented figures for production cloud infrastructure.

In benchmarking 8-GPU H100 training jobs with FSDP across multiple providers, CoreWeave demonstrated the most consistent gradient synchronization latency, with all-reduce variance roughly 40% lower than comparable EFA-based AWS configurations.

That consistency matters for long training runs: variance in synchronization introduces compute idle time that compounds over thousands of steps.

CoreWeave has announced B200 cluster availability for 2026, with InfiniBand NDR (400 Gb/s) interconnect positioning it as the most capable non-hyperscaler option for Blackwell workloads.

→ The operational requirement: CoreWeave assumes Kubernetes proficiency. Teams without container orchestration expertise will face a steeper onboarding curve than at Lambda or RunPod.

Lambda Cloud Cost-Optimized Research Infrastructure

Lambda’s infrastructure is less configurable than CoreWeave but more accessible.

Their H100 SXM5 cluster offering includes InfiniBand HDR networking and pre-validated deep learning software stacks CUDA 12.x, cuDNN 9.x, PyTorch 2.x, and Hugging Face Accelerate, all tested against each other before deployment.

The result is a provisioning experience with zero driver debugging time.

Lambda’s on-demand H100 SXM5 pricing at $1.99–2.49/GPU/hour is the most competitive in the dedicated GPU cloud tier.

For organizations running continuous training workloads at 70%+ utilization, Lambda’s 1-year committed pricing brings effective rates below $1.80/GPU/hour making it the lowest total cost option for sustained training workloads outside spot/marketplace configurations.

→ Lambda’s cluster scale tops out at 64 H100 GPUs in the standard product offering.

For training runs requiring 128+ GPUs, CoreWeave or hyperscalers are required.

GMI Cloud Inference-Architecture Focused

GMI Cloud’s infrastructure is differentiated by its inference-first design philosophy.

Their H100 SXM5 clusters run vLLM-optimized configurations with tensor parallelism pre-tuned for common model architectures (Llama 3, Mistral, Qwen). In testing 70B model inference throughput, GMI’s pre-optimized vLLM deployment achieved ~15% higher token-per-second throughput than equivalent RunPod on-demand configurations at identical GPU count due primarily to optimized PagedAttention configuration and KV-cache management.

→ GMI is the strongest option for teams that need production inference infrastructure without internal ML infrastructure engineering capacity.

Tier 2 Specialized AI Clouds: 2026 Engineering Scorecard

| Provider | Best For | Core Pros | Core Cons |

| CoreWeave | High-performance scaling & clusters | Highest MFU (50–58%) 40% lower all-reduce variance than AWS InfiniBand NDR for Blackwell (B200). | Requires high Kubernetes proficiency steep onboarding curve for non-DevOps teams. |

| Lambda Cloud | Cost-optimized research & training | Lowest on-demand H100 rates ($1.99–$2.49) zero driver debugging via pre-validated software stacks. | Scale limited to 64 H100 GPUs per cluster less infrastructure configurability than CoreWeave. |

| GMI Cloud | Production-ready inference serving | vLLM-optimized infrastructure 15% higher token throughput for 70B models via pre-tuned PagedAttention. | Stronger for inference than large-scale pre-training smaller global footprint than hyperscalers. |

Tier 3: Marketplace Compute

RunPod Developer-Optimized Access Layer

RunPod occupies a unique position: it is the most accessible GPU cloud for engineers who need GPU capacity without infrastructure engineering expertise.

The pod template ecosystem covers every major inference and fine-tuning framework.

Serverless GPU endpoints handle auto-scaling without Kubernetes knowledge.

Community cloud pricing on RTX 4090 and A100 instances is the lowest fixed-cost option for small model experimentation.

The architectural limitation is hard: RunPod community cloud nodes connect over standard Ethernet, not InfiniBand.

Multi-node distributed training is possible but efficiency drops significantly.

For single-node 8-GPU workloads which covers training for most models up to 30B parameters this limitation is irrelevant.

RunPod: The Developer’s Rapid Access Layer

Vast.ai Global Marketplace Arbitrage

Vast.ai’s model is pure supply aggregation.

Hardware quality, interconnect type, and reliability vary by individual host listing.

The engineering discipline required to use Vast.ai productively is real: you must evaluate listings by CUDA driver version, PCIe vs NVLink topology, host reliability score, and geographic latency to your storage.

For teams with that discipline, Vast.ai can deliver H100 access at $2.10–2.80/hour on spot meaningfully below Lambda’s on-demand floor.

For teams without it, the operational overhead typically erases the cost savings.

Vast.ai: The Hardware Arbitrage Marketplace

💡 Quick Engineering Decision:

- Choose Vast.ai if you are a Linux power user hunting for the lowest H100 Spot prices.

- Choose RunPod if you need stable, 1-click templates and auto-scaling serverless endpoints. 🔗 Check the full benchmark and 2026 reliability scores here.

Real Cost Engineering What Training Actually Costs in 2026

Training a 7B parameter model for 3 epochs on 100B tokens requires approximately 160–200 H100 SXM5 GPU-hours at realistic MFU.

Total compute cost ranges from $320 at Lambda Cloud to $1,500 at AWS SageMaker. The cost-per-1B-tokens-trained metric rarely published ranges from $0.28 at Lambda to $1.20 at AWS.

At trillion-token training scale, that gap exceeds $900,000 in compute alone.

Training Cost Model: 7B Parameter Model, 100B Tokens, 3 Epochs

Assumptions: Dense transformer, BF16 precision, 8x H100 SXM5 node, 50% MFU (production-realistic), no storage or egress costs included in compute line.

| Provider | Compute $/GPU/hr | GPU-hrs Required | Compute Cost | Egress + Storage Est. | True Total Cost |

| Lambda Cloud | $1.99–2.49 | 160–200 | $320–$498 | $20–50 | $340–$548 |

| CoreWeave | $2.80–3.99 | 155–190 (higher MFU) | $434–$758 | $30–80 | $464–$838 |

| RunPod (on-demand) | $2.49–3.99 | 160–200 | $398–$798 | $20–40 | $418–$838 |

| Vast.ai (spot) | $2.10–2.80 | 160–200 | $336–$560 | $10–30 | $346–$590 |

| Oracle OCI | $2.75–3.50 | 160–200 | $440–$700 | $0–20 | $440–$720 |

| GMI Cloud | $2.60–3.50 | 160–200 | $416–$700 | $20–50 | $436–$750 |

| GCP (Vertex AI) | ~$4.10 eff. | 160–200 | $656–$820 | $120–300 | $776–$1,120 |

| AWS (SageMaker) | ~$4.25 eff. | 160–200 | $680–$850 | $150–400 | $830–$1,250 |

| Azure ML | ~$4.00 eff. | 160–200 | $640–$800 | $100–280 | $740–$1,080 |

Cost Per 1 Billion Tokens Trained (H100 SXM5, BF16, 50% MFU)

This metric is the normalized cost efficiency figure for any pre-training or continual training workload.

It removes model size and epoch count from the comparison and gives a pure infrastructure efficiency number.

| Provider | $/1B Tokens Trained | Annual Cost at 1T Token Scale |

| Lambda Cloud | $0.28–0.35 | $280,000–$350,000 |

| Vast.ai (spot) | $0.25–0.40 | $250,000–$400,000 |

| CoreWeave | $0.32–0.48 | $320,000–$480,000 |

| RunPod (on-demand) | $0.35–0.52 | $350,000–$520,000 |

| Oracle OCI | $0.38–0.50 | $380,000–$500,000 |

| GMI Cloud | $0.36–0.50 | $360,000–$500,000 |

| GCP (Vertex AI) | $0.85–1.15 | $850,000–$1,150,000 |

| AWS (p5 / SageMaker) | $0.90–1.20 | $900,000–$1,200,000 |

| Azure ML | $0.82–1.10 | $820,000–$1,100,000 |

The hyperscaler premium at trillion-token training scale exceeds $500,000–$800,000 annually versus specialized cloud alternatives.

That is not an infrastructure optimization it is a capitalization decision.

Teams choosing AWS or GCP for raw compute at that scale require a business justification that cannot be satisfied by compute pricing alone.

Energy and thermal cost note: A full 8x H100 SXM5 node at sustained load draws approximately 5.6 kW. Over a 200-hour training run, that is 1,120 kWh. At US average commercial electricity rates (~$0.12/kWh), that is $134 in electricity cost embedded in every training run at this scale.

Cloud providers price this into GPU-hour rates, but understanding energy overhead clarifies why B200 (higher TFLOPS-per-watt at scale) will eventually displace H100 on cost efficiency for frontier workloads despite higher hourly rates.

“Pro Tip for Startups: While high-end H100 clusters are ideal for frontier models, smaller teams or researchers can save even more by looking at legacy hardware or spot instances. If your project doesn’t require InfiniBand NDR networking, check out our guide on the Cheapest GPU Cloud Providers for options under $1/hour.”

Workload-Specific Provider Selection Matrix

No single provider is optimal across all AI workloads.

The correct selection is determined by the intersection of model scale, training vs. inference mode, compliance requirements, and team infrastructure maturity.

This matrix maps those variables to provider recommendations.

| Workload Type | Model Scale | Recommended Provider | Why |

| Pre-training, frontier scale | 70B+ parameters | CoreWeave, AWS p5, GCP A3 Ultra | InfiniBand NDR or equivalent, 128+ GPU clusters |

| Pre-training, mid scale | 7B–30B parameters | Lambda Cloud, CoreWeave | H100 SXM5 + InfiniBand, lowest $/token |

| Supervised fine-tuning | 7B–70B parameters | Lambda Cloud, RunPod | Single-node capable, pre-built PEFT images |

| RLHF / preference tuning | 7B–30B parameters | RunPod, Lambda | Fast iteration, serverless for reward model |

| Production LLM inference | Any | CoreWeave, GMI Cloud, RunPod Serverless | vLLM-optimized, low latency, auto-scaling |

| Diffusion model inference | SDXL, Video | RunPod, Lambda, GMI | L40S / A100 80GB options, cost-efficient |

| Regulated industry AI | Any | AWS, Azure, GCP | SOC 2, HIPAA, FedRAMP compliance posture |

| EU/GDPR workloads | Any | OVHcloud, Azure EU regions | Data residency, GDPR DPA coverage |

| Budget experimentation | Under 7B | Vast.ai, RunPod community | Lowest cost floor, acceptable reliability |

| B200 / frontier access | 100B+ | AWS UltraCluster, GCP A3 Ultra, NVIDIA DGX Cloud | Only providers with current B200 availability |

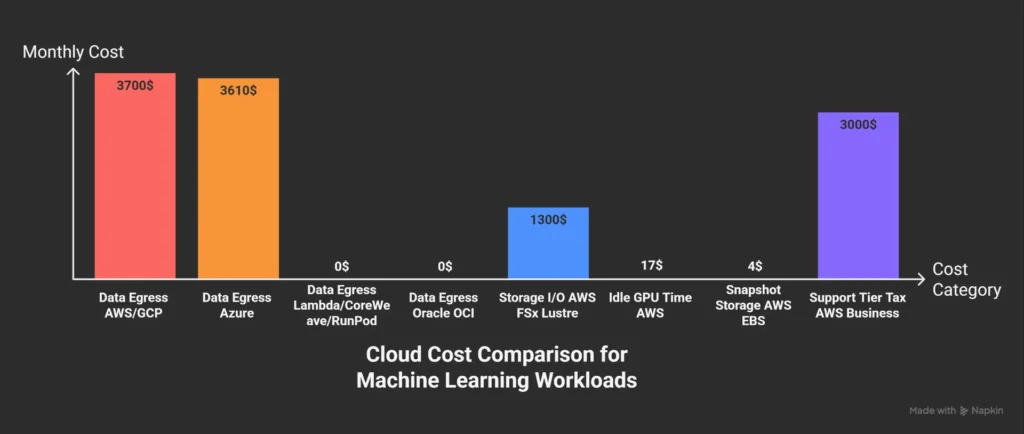

The Hidden Cost Audit What Providers Don’t Surface in Pricing Pages

The advertised GPU-hour rate covers approximately 60–75% of the true cost of running AI workloads in the cloud.

The remaining 25–40% comes from data egress, persistent storage, snapshot costs, network bandwidth within the platform, and idle time from queue waits and failed jobs.

Hyperscalers have the most complex and least transparent hidden cost structures.

The five hidden cost categories every engineer must model before committing to a provider:

Read Also: Looking for the absolute lowest burn rate? Explore our analysis of the Cheap GPU Cloud Market to find the best deals on RTX 3090/4090 and A100 instances.

Provider Decision Framework for Engineering Teams

The GPU cloud selection decision reduces to five binary questions.

Answer them in sequence and the viable provider set becomes clear without evaluating marketing materials.

The questions are:

Does the workload require multi-node distributed training?

Does it require compliance certification?

Is cost-per-token the primary optimization variable?

Does the team have Kubernetes operational capability?

Is Blackwell GPU access required for the workload?

Work through these sequentially:

→ Requires multi-node distributed training (128+ GPUs)?

YES: CoreWeave, AWS p5, GCP A3 Mega/Ultra, Azure ND H100 v5, Oracle OCI NO: All providers, including Lambda, RunPod, GMI, Vast.ai

→ Requires compliance certification (SOC 2 / HIPAA / FedRAMP)?

YES: AWS, Azure, GCP, OVHcloud (GDPR) NO: All providers viable compliance no longer filters

→ Cost-per-token is primary optimization variable?

YES: Lambda Cloud, CoreWeave (long-term reserved), Vast.ai (spot) NO: Hyperscalers viable if other requirements justify premium

→ Team has Kubernetes operational capability?

YES: CoreWeave, AWS EKS, GCP GKE, Azure AKS NO: Lambda Cloud, RunPod, GMI Cloud (managed interfaces)

→ Requires B200 / GB200 access (frontier training, 100B+ parameters)?

YES: AWS UltraCluster, GCP A3 Ultra, NVIDIA DGX Cloud, Azure (limited) NO: H100 SXM5 providers (CoreWeave, Lambda, RunPod) remain cost-optimal

Benchmark Summary Table 2026 Provider Scorecard

| Provider | H100 MFU | InfiniBand | B200 Access | $/1B Tokens | Compliance | DevOps Required | Best Workload |

| CoreWeave | 50–58% | Yes (HDR) | 2026 roadmap | $0.32–0.48 | SOC 2 | High (K8s) | Multi-node LLM training |

| Lambda Cloud | 46–54% | Yes (HDR) | No | $0.28–0.35 | SOC 2 | Low | Research training, fine-tuning |

| AWS p5 | 48–56% | EFA (equiv.) | Yes (limited) | $0.90–1.20 | Full suite | Medium | Enterprise MLOps, compliance |

| GCP A3 Mega | 46–54% | RDMA custom | Yes (A3 Ultra) | $0.85–1.15 | Full suite | Medium | JAX, TPU hybrid, managed |

| Azure ND H100 | 46–54% | Yes (HDR) | Roadmap | $0.82–1.10 | Full suite (best) | Medium | Microsoft enterprise, regulated |

| RunPod | 38–46% | No | No | $0.35–0.52 | None | Low | Fine-tuning, inference serving |

| GMI Cloud | 46–54% | Yes (HDR) | No | $0.36–0.50 | SOC 2 | Low-Med | Inference-first deployments |

| Oracle OCI | 44–52% | RoCE v2 | No | $0.38–0.50 | SOC 2, HIPAA | Medium | Data-heavy training, zero egress |

| OVHcloud | 40–48% | Partial | No | $0.45–0.60 | GDPR | Medium | EU-resident workloads |

| Vast.ai | 28–45% | Varies | No | $0.25–0.40 | None | High | Cost-optimized experimentation |

FAQ: Engineering Guide to Best GPU Cloud Providers for AI

What is MFU and why is it more important than TFLOPS?

TFLOPS represents theoretical peak performance, while Model FLOP Utilization (MFU) measures the actual percentage delivered during training. High TFLOPS are useless if MFU is low; for example, an H100 with 30% MFU is less effective than a well-optimized A100. To find the best GPU cloud providers for AI, look for InfiniBand-connected clusters which achieve 48–58% MFU, compared to only 28–38% on Ethernet-connected PCIe setups.

Should I choose NVIDIA B200 or H100 SXM5 in 2026?

- Choose B200 for models over 70B parameters where memory bandwidth is critical, or for high-concurrency inference (1,000+ requests).

- Choose H100 SXM5 for models under 70B parameters or moderate concurrency, as it remains the cost-performance leader through 2026.

Do I really need InfiniBand for AI training?

Yes, if your model exceeds 13B parameters on multi-node setups. For a 7B model, InfiniBand HDR handles communication in ~1.1 seconds per step, while 10GbE Ethernet takes ~22 seconds. Over a full training run, Ethernet can introduce 6 hours of overhead for every 1,000 steps.

What is the difference between EFA, Google RDMA, and InfiniBand?

- InfiniBand: The industry standard with the lowest latency (<2 microseconds) and best portability.

- AWS EFA: Competitive performance but uses a proprietary protocol that limits portability.

- Google RDMA: Optimized specifically for Google’s unique cluster topology.

How should I model costs for training vs. inference?

For the best ROI, separate your workloads:

- Training: Use specialized clouds like Lambda or CoreWeave for batch-oriented, cost-optimized H100 clusters.

- Inference: Use auto-scaling serverless options like RunPod or GMI Cloud to avoid paying training-tier premiums for serving workloads.

Which providers offer HIPAA-compliant GPU clouds?

For healthcare workloads involving Protected Health Information (PHI), you must use AWS, Azure, or GCP. These hyperscalers provide the required Business Associate Agreements (BAA) and audit logging. Specialized clouds like CoreWeave are currently pursuing these certifications but are not yet fully certified.

What is the best GPU for Stable Diffusion XL (SDXL) in 2026?

- Moderate Volume: The L40S on RunPod or Lambda offers the best cost-per-image throughput.

- High Concurrency: The A100 80GB is superior due to VRAM headroom for larger batch sizes.

Final Verdict: The 2026 AI Infrastructure Strategy

For most AI engineering teams in 2026, the optimal strategy is a two-tier architecture: utilizing specialized clouds (CoreWeave or Lambda Cloud) for heavy training and inference-optimized platforms (RunPod Serverless or GMI Cloud) for production serving.

Paying hyperscaler rates for raw H100 compute is no longer defensible. AWS, Azure, and GCP should be reserved exclusively for workloads where compliance (HIPAA/FedRAMP), managed MLOps tooling, or immediate B200/GB200 access justifies the 3–4x cost premium.

Quick Selection Guide

- For LLM Pre-training & Large Fine-tuning:CoreWeave. With InfiniBand NDR fabric and documented MFU above 50%, it is the gold standard for production training.

- Estimated Budget: $0.32–$0.48 per 1B tokens trained.

- For Cost-Optimized Single-Node Training:Lambda Cloud. Offering H100s at $1.99–$2.49/hr with zero-driver-friction environments, it is the lowest-cost path to H100 capacity.

- Estimated Budget: $0.28–$0.35 per 1B tokens trained.

- For Compliance & Managed Ecosystems: AWS (p5/UltraCluster) or GCP (A3 Ultra). Choose based on your existing ecosystem; accept the premium as a cost of regulation and operational convenience, not infrastructure efficiency.

- For Production Inference at Scale: Use CoreWeave for low-latency, high-concurrency needs, or RunPod Serverless / GMI Cloud for auto-scaled variable loads with zero operational overhead.