Picking the wrong GPU cloud can cost you thousands of dollars or, worse, slow down your AI project at a critical moment.

Whether you’re training a large language model, running Stable Diffusion at scale, fine-tuning an open-source model, or deploying real-time inference APIs, your choice of GPU cloud directly determines your cost per experiment, your iteration speed, and your production reliability.

Two platforms consistently rise to the top of developer discussions in 2026: RunPod and Paperspace.

Both offer cloud GPU access.

But they’re built around very different philosophies, and they serve different users.

In this in-depth comparison, we break down RunPod vs Paperspace across pricing, available GPUs, ease of use, MLOps tooling, and real-world AI workloads. If you’re also evaluating other providers, check out our full Best GPU Cloud Providers for AI in 2026 guide and our Vast.ai vs RunPod comparison for a wider view of the market.

RunPod vs Paperspace: Quick Verdict

RunPod is better for:

- Cheap GPU access

- RTX 4090 workloads

- Stable Diffusion

- AI startups

Paperspace is better for:

- ML pipelines

- Jupyter notebooks

- Teams

- Managed MLOps


Quick Comparison: RunPod vs Paperspace at a Glance

| Category | RunPod | Paperspace |
| --- | --- | --- |
| Starting Price | ~$0.19/hr (RTX 3090) | ~$0.45/hr (A4000) |
| GPU Selection | Very wide (30+ GPU types) | Moderate (15+ GPU types) |
| H100 / A100 Access | Yes (spot + on-demand) | Yes (on-demand only) |
| RTX 4090 Access | Yes (Community Cloud) | Limited availability |
| Ease of Use | Moderate (dev-focused) | Easy (beginner-friendly) |
| Deployment Speed | Fast (30 to 90 seconds) | Fast (1 to 3 minutes) |
| Managed MLOps | Basic (Serverless) | Full (Gradient platform) |
| Notebooks / IDE | Limited (SSH focus) | Yes (Jupyter + VS Code) |
| Persistent Storage | Yes (Network Volumes) | Yes (included per plan) |
| Serverless Endpoints | Yes | Yes (Gradient Deployments) |
| Free Tier | No (credits only) | Yes (limited GPU hours) |
| Best For | Budget, inference, solo devs | MLOps, teams, beginners |

RunPod: Overview, Features, and Pricing

What Is RunPod?

RunPod is a GPU cloud marketplace launched in 2022 that lets developers rent GPU instances from a global network of data centers and individual server operators.

This decentralized model keeps prices significantly lower than hyperscalers like AWS or Google Cloud, and even lower than most managed GPU platforms.

RunPod has matured into a full AI infrastructure platform with serverless endpoints, one-click model templates, persistent storage, and team management features.

It’s the go-to platform for cost-conscious developers and AI startups who want to move fast without burning their budget.

For a broader view of where RunPod fits in the GPU cloud market, see our Best GPU Cloud Providers for AI guide.

RunPod’s Main Features

- Secure Cloud and Community Cloud: data center nodes vs. individual operators

- RunPod Serverless: autoscaling AI endpoint deployment, billed only per request

- One-click templates: Stable Diffusion, Llama, Mistral, Whisper, and more

- Persistent storage via Network Volumes (~$0.07/GB/month)

- SSH, Jupyter, web terminal, and VS Code Server access

- Custom Docker container support with full environment control

- Spot pricing for interruptible workloads at the lowest possible cost

- Pod API for programmatic GPU management
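As a rough illustration of the Network Volumes rate listed above, the sketch below estimates a monthly storage bill. The ~$0.07/GB/month figure is this article's estimate, and the function name and the 200 GB volume size are hypothetical, chosen only for the example.

```python
# Estimated Network Volume rate from the feature list above (USD per GB per month).
NETWORK_VOLUME_USD_PER_GB_MONTH = 0.07


def monthly_storage_cost(size_gb: float) -> float:
    """Return the estimated monthly cost of a persistent volume of size_gb."""
    return size_gb * NETWORK_VOLUME_USD_PER_GB_MONTH


# Hypothetical 200 GB volume holding model checkpoints and datasets.
print(f"${monthly_storage_cost(200):.2f} per month")
```

At the estimated rate, a 200 GB volume works out to about $14 a month, so storage is usually a small line item next to GPU time.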

GPUs Available on RunPod

| GPU | VRAM | Best For |
| --- | --- | --- |
| NVIDIA RTX 4090 | 24 GB | Stable Diffusion, inference, fine-tuning |
| NVIDIA RTX 3090 | 24 GB | Budget training, image generation |
| NVIDIA A100 (40 GB) | 40 GB | LLM training, fine-tuning |
| NVIDIA A100 (80 GB) | 80 GB | Large-scale LLM training |
| NVIDIA H100 (80 GB) | 80 GB | Frontier model training |
| NVIDIA A40 | 48 GB | Professional ML + rendering |
| NVIDIA RTX A6000 | 48 GB | High-VRAM fine-tuning |
| AMD MI300X | 192 GB | Massive LLM training (select regions) |

RunPod Pricing Model

RunPod bills by the second at the listed hourly rate, with no minimum commitment.

Prices vary between:

- Secure Cloud: data center infrastructure, higher reliability, slightly higher price

- Community Cloud: individual server owners, lower prices, variable reliability

- Spot pricing: interruptible instances at the lowest rates
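To make per-second billing concrete, here is a minimal sketch. It assumes charges accrue by the second at the quoted hourly rate, with no rounding up and no minimum charge. The function name and the 25-minute run are hypothetical; the ~$0.49/hr rate is this article's estimate for an RTX 4090 on Community Cloud.

```python
def job_cost(hourly_rate_usd: float, runtime_seconds: int) -> float:
    """Cost of a job billed by the second at an hourly rate."""
    return hourly_rate_usd / 3600 * runtime_seconds


# Article estimate for an RTX 4090 on Community Cloud (USD per hour).
RTX_4090_COMMUNITY_USD_PER_HOUR = 0.49

# A hypothetical 25-minute run is charged for 1,500 seconds, not a full hour.
cost = job_cost(RTX_4090_COMMUNITY_USD_PER_HOUR, 25 * 60)
print(f"${cost:.2f}")  # prints $0.20
```

Under whole-hour billing the same run would cost the full $0.49, which is why per-second billing matters most for short, frequent experiments.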

For detailed strategies to cut GPU costs, read our Ultimate Guide to Cheap GPU Cloud, which covers how to save 40–70% on AI training costs.

Best Use Cases for RunPod

- Stable Diffusion and ComfyUI at scale: RTX 4090 readily available

- LLM fine-tuning and inference: A100 and A6000 at low cost

- Serverless AI API endpoints with autoscaling

- Solo developers and researchers needing flexible, cheap GPU access

- AI startups wanting to ship fast without committing to reserved instances

Paperspace: Overview, Features, and Pricing

What Is Paperspace?

Paperspace, acquired by DigitalOcean in 2023, is a cloud GPU platform built for teams and organizations who need more than raw compute.

Its flagship product, Gradient, is a fully managed MLOps platform that includes Jupyter notebooks, ML pipelines, model deployment, and project management in a single interface.

Where RunPod appeals to developers comfortable with Docker and SSH, Paperspace lowers the barrier to entry considerably.

It’s the platform of choice for ML engineers who want polished tooling, reliable infrastructure, and a collaborative environment, and who are willing to pay a modest premium for it.

Paperspace competes most directly with managed GPU platforms. For a full comparison of the managed hosting landscape, see our Best Managed GPU Cloud Hosting review.

Paperspace’s Main Features

- Gradient Notebooks: managed Jupyter environments with GPU support

- Gradient Deployments: serverless model hosting and inference APIs

- Gradient Workflows: ML pipeline orchestration with DAG support

- Persistent storage included up to per-plan limits

- Pre-built ML containers for PyTorch, TensorFlow, JAX, and Hugging Face

- Virtual Machines (Machines product) for desktop GPU workloads

- Team collaboration, role-based access, and project organization

- Backed by DigitalOcean’s global data center infrastructure

GPUs Available on Paperspace

| GPU | VRAM | Best For |
| --- | --- | --- |
| NVIDIA A100 (80 GB) | 80 GB | Large-scale LLM training |
| NVIDIA A6000 | 48 GB | High-VRAM fine-tuning |
| NVIDIA A4000 | 16 GB | Mid-range training and inference |
| NVIDIA RTX 4000 Ada | 20 GB | Modern inference workloads |
| NVIDIA P5000 | 16 GB | Legacy training tasks |
| NVIDIA M4000 | 8 GB | Light workloads and learning |

Paperspace Pricing Model

Paperspace uses hourly billing for GPU instances and monthly subscription tiers for Gradient:

| Plan | Price | What You Get |
| --- | --- | --- |
| Free | $0/month | Limited GPU hours, shared compute |
| Pro | $8/month | Faster GPUs, longer sessions |
| Growth | Custom | Team features, more storage |
| Enterprise | Custom | Compliance, SLA, dedicated support |

⚠️ One important note: Idle instances can still incur charges if not properly shut down, a common surprise for first-time users. Always stop your notebooks and pods when they are not in use.
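To see how quickly a forgotten instance adds up, consider the rough sketch below. It assumes an idle instance is billed at the same hourly rate as a busy one. The function name and the weekend scenario are hypothetical; the ~$0.45/hr A4000 rate is this article's estimate.

```python
def idle_cost(hourly_rate_usd: float, idle_hours: float) -> float:
    """Estimated charge for an instance left running but unused."""
    return hourly_rate_usd * idle_hours


# Article estimate for a Paperspace A4000 (USD per hour).
A4000_USD_PER_HOUR = 0.45

# Hypothetical scenario: left running from Friday 6 pm to Monday 6 am.
# 6 h (Fri) + 24 h (Sat) + 24 h (Sun) + 6 h (Mon) = 60 h
weekend_hours = 6 + 24 + 24 + 6

print(f"${idle_cost(A4000_USD_PER_HOUR, weekend_hours):.2f}")  # prints $27.00
```

One forgotten weekend on a mid-range GPU costs more than three months of the $8/month Pro plan, and the gap widens with pricier GPUs.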

Best Use Cases for Paperspace

- Teams building collaborative ML workflows and projects

- Beginners learning ML in a managed Jupyter environment

- MLOps pipelines and automated model training workflows

- Model deployment via Gradient Deployments

- Organizations needing enterprise compliance and access controls

Performance Comparison: RunPod vs Paperspace

RTX 4090 Availability and Performance

The RTX 4090 has become the workhorse GPU for Stable Diffusion, ComfyUI, and cost-effective LLM inference.

RunPod’s Community Cloud typically has strong RTX 4090 availability, often with multiple nodes across regions at any given time.

Paperspace has limited RTX 4090 supply, and pricing tends to be higher when available.

If RTX 4090 performance for image generation is your priority, also check our RTX 5090 vs 4090 for AI guide, which breaks down whether upgrading hardware is worth it versus renting a cloud GPU.

A100 and H100 Availability

For serious LLM training (fine-tuning Llama, training custom transformers, or running distributed workloads), you need A100 or H100 GPUs. Both platforms offer A100 access.

RunPod also provides H100 SXM nodes through its Secure Cloud, whereas Paperspace currently offers H100s only on a limited basis.

Deployment and Startup Speed

RunPod typically launches pods in 30 to 90 seconds depending on GPU availability.

Paperspace Gradient Notebooks generally take 1 to 3 minutes, partly due to environment initialization and dependency loading.

For rapid iteration, RunPod’s speed advantage is noticeable.

Network and Infrastructure Quality

RunPod Secure Cloud nodes are hosted in professional data centers with high-bandwidth interconnects.

Community Cloud nodes vary in network quality depending on the individual operator.

Paperspace, backed by DigitalOcean’s infrastructure, offers consistent and reliable network performance across its fleet.

Head-to-Head Performance Table

| Metric | RunPod | Paperspace | Winner |
| --- | --- | --- | --- |
| RTX 4090 Availability | High (Community Cloud) | Low / Limited | RunPod |
| A100 40GB Access | Yes (Secure Cloud) | Yes | Tie |
| A100 80GB Access | Yes | Yes | Tie |
| H100 Access | Yes | Limited | RunPod |
| Pod Startup Speed | 30–90 seconds | 1–3 minutes | RunPod |
| Network Stability | Varies (community) | Consistent | Paperspace |
| Multi-GPU Support | Yes (up to 8x) | Yes (up to 8x) | Tie |
| Spot / Interruptible | Yes | Limited | RunPod |
| Uptime (Secure Cloud) | High | High | Tie |

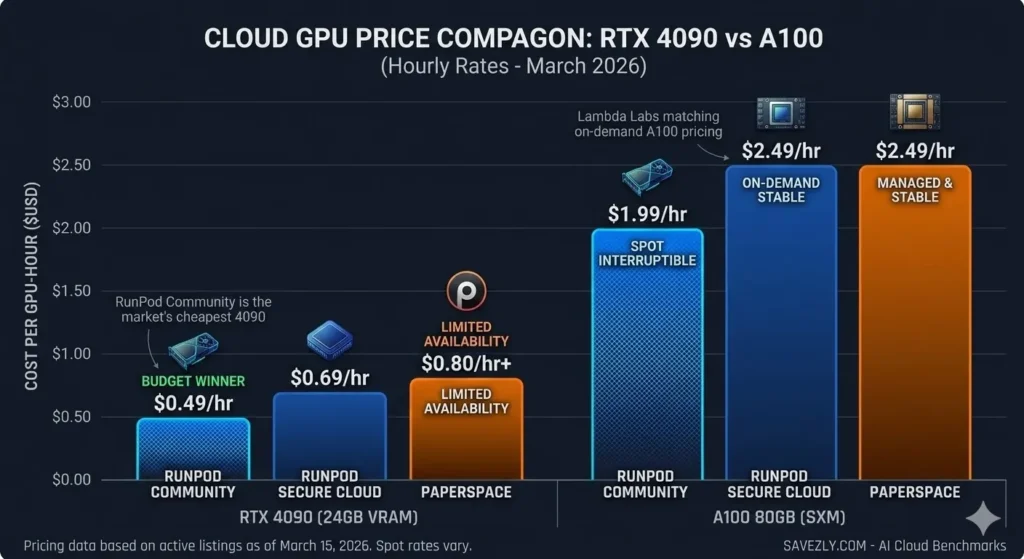

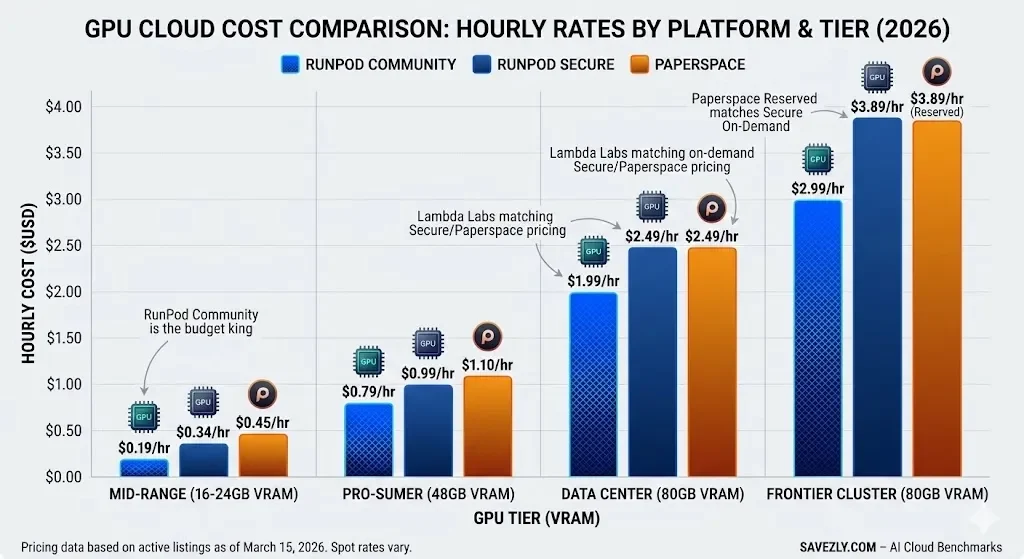

Pricing Comparison: RunPod vs Paperspace (2026 Estimates)

Pricing is the single most important factor for most individual developers and early-stage AI startups.

The table below shows estimated hourly costs as of early 2026.

⚠️ GPU cloud pricing is dynamic.

Always verify current rates directly on each platform before committing to a workload.

| GPU | RunPod Community | RunPod Secure | Paperspace |
| --- | --- | --- | --- |
| RTX 3090 (24 GB) | ~$0.19/hr | ~$0.34/hr | N/A |
| RTX 4090 (24 GB) | ~$0.49/hr | ~$0.69/hr | Limited / ~$0.80+/hr |
| A4000 (16 GB) | ~$0.29/hr | ~$0.44/hr | ~$0.45/hr |
| A100 40GB | ~$1.29/hr | ~$1.64/hr | ~$1.85/hr |
| A100 80GB | ~$1.99/hr | ~$2.49/hr | ~$2.49/hr |
| H100 80GB | ~$2.99/hr | ~$3.89/hr | Limited |
| A6000 (48 GB) | ~$0.79/hr | ~$0.99/hr | ~$1.10/hr |

💡 Bottom line: RunPod’s Community Cloud is almost always the cheapest option in the market. Paperspace is priced as a managed service: you pay a premium for polished tooling and reliable infrastructure.
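To put a number on that premium, the sketch below computes how much cheaper RunPod Community Cloud is than Paperspace for each GPU that both columns of the pricing table price. The figures are the estimates from that table, not live rates, and the function name is hypothetical.

```python
# Hourly estimates (USD) from the pricing table: (RunPod Community, Paperspace).
# The Paperspace RTX 4090 figure is listed as "~$0.80+" with limited availability,
# so treat that row as a lower bound on the gap.
PRICES = {
    "RTX 4090": (0.49, 0.80),
    "A4000": (0.29, 0.45),
    "A100 40GB": (1.29, 1.85),
    "A100 80GB": (1.99, 2.49),
    "A6000": (0.79, 1.10),
}


def savings_pct(runpod: float, paperspace: float) -> float:
    """Percentage saved by paying the RunPod rate instead of the Paperspace rate."""
    return (paperspace - runpod) / paperspace * 100


for gpu, (runpod, paperspace) in PRICES.items():
    print(f"{gpu}: about {savings_pct(runpod, paperspace):.0f}% cheaper on RunPod Community")
```

On these estimates the gap ranges from roughly 20% (A100 80GB) to just under 40% (RTX 4090), with most GPUs landing around 30%.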

For aggressive cost optimization strategies, read our Ultimate Cheap GPU Cloud Guide.

Pros and Cons

RunPod

Paperspace

Use Case Comparison: Which Platform Wins?

| Use Case | Best Platform | Why |
| --- | --- | --- |
| Stable Diffusion / ComfyUI | RunPod | RTX 4090 + low cost + SD templates out-of-the-box |
| LLM Fine-Tuning (small models) | RunPod | Cheaper A100/A6000 + Docker-native workflow |
| LLM Training (large-scale) | Tie | Both offer A100; RunPod has H100 edge |
| Managed ML Notebooks | Paperspace | Gradient Notebooks are best-in-class |
| AI Startups (early stage) | RunPod | Community Cloud savings are significant |
| Team ML Workflows | Paperspace | Pipelines, collaboration, and deploys in one place |
| Inference API Endpoints | RunPod | Serverless is fast, cheap, and autoscaling |
| Beginners Learning ML | Paperspace | Lower barrier, free tier, excellent guides |
| Desktop GPU Workloads | Paperspace | Machines product is purpose-built for this |
| Cost Optimization | RunPod | Community Cloud pricing is hard to beat |

For AI image generation use cases, Stable Diffusion on RunPod pairs well with our roundup of the 12 Best Free AI Image Generators Without Watermarks in 2026, which is useful if you’re comparing hosted vs self-hosted generation costs.

Final Verdict: RunPod vs Paperspace

RunPod wins on price and GPU variety.

Paperspace wins on usability and MLOps.

Choose RunPod if: You’re a developer or researcher comfortable with Docker and SSH, need cheap GPU access, want RTX 4090 or H100 availability, or are building serverless AI inference APIs.

RunPod is the go-to platform for cost-efficiency and flexibility in 2026.

For a broader set of budget-first options, see our Cheap GPU Cloud guide.

Choose Paperspace if: You’re a team building a full ML workflow, a beginner who wants managed Jupyter notebooks, or an organization that needs pipelines, deployments, and collaboration in one place. Paperspace is worth the premium for the integrated MLOps experience.

For a full comparison of managed platforms, read our Managed GPU Cloud Hosting review.

For most solo developers and early-stage AI startups, RunPod will save money and provide more GPU variety.

For teams building production ML systems who value workflow tooling over raw price, Paperspace is the stronger long-term platform.

🔗 Related Reading on Savezly

- Best GPU Cloud Providers for AI — 2026 Rankings — Full ranked guide to 10+ providers

- Vast.ai vs RunPod (2026) — Two decentralized GPU platforms head-to-head

- Best Managed GPU Cloud Hosting — For teams and enterprise AI workloads

- Cheap GPU Cloud: Save 40–70% — Budget strategies for AI developers

- RTX 5090 vs 4090 for AI — Is upgrading local hardware worth it vs renting cloud GPU?

Frequently Asked Questions (FAQ)

Is RunPod cheaper than Paperspace?

Yes.

In most cases, RunPod is significantly cheaper than Paperspace, especially for high-end GPUs like the RTX 4090 and A100.

Based on the estimates in the pricing table above, RunPod’s Community Cloud is roughly 20–40% less expensive per GPU-hour.

Paperspace charges a premium for its managed infrastructure and workflow tooling.

Which is better for Stable Diffusion: RunPod or Paperspace?

RunPod is the better choice for Stable Diffusion.

It has strong RTX 4090 availability, pre-built Stable Diffusion and ComfyUI templates, and a lower cost per hour of image generation.

For hosted alternatives, see our roundup of the best free AI image generators in 2026.

Does RunPod support serverless inference?

Yes.

RunPod Serverless lets you deploy AI models as autoscaling API endpoints.

You pay only when requests are processed, making it highly cost-effective for variable inference workloads like text generation or image synthesis.

Cold start times are typically under 3 seconds.
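Whether pay-per-request beats an always-on pod depends on how busy the endpoint is. The sketch below finds the break-even point under simplifying assumptions: serverless compute is billed per second of active processing, and cold starts and storage are ignored. The serverless rate is a HYPOTHETICAL placeholder, not a published price; the ~$0.69/hr pod rate is this article's estimate for an RTX 4090 on Secure Cloud.

```python
def breakeven_busy_seconds(pod_hourly_usd: float, serverless_usd_per_second: float) -> float:
    """Busy seconds per hour at which serverless spend equals an always-on pod."""
    return pod_hourly_usd / serverless_usd_per_second


# Article estimate for an RTX 4090 on Secure Cloud (USD per hour).
POD_HOURLY_USD = 0.69
# HYPOTHETICAL serverless rate for illustration only; check the live price list.
SERVERLESS_USD_PER_SECOND = 0.0004

busy = breakeven_busy_seconds(POD_HOURLY_USD, SERVERLESS_USD_PER_SECOND)
print(f"Break-even at about {busy:.0f} busy seconds per hour ({busy / 3600:.0%} utilization)")
```

With these placeholder numbers, serverless stays cheaper until the endpoint is busy a little under half of every hour. Bursty or low-traffic APIs fall well below that line, while a steadily loaded endpoint may be better served by a dedicated pod.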

Does Paperspace have a free tier?

Yes.

Paperspace Gradient offers a Free tier with limited GPU hours per month, enough for learning and light experimentation.

For serious workloads you’ll need Gradient Pro ($8/month) or higher.

The Pro plan unlocks faster GPUs and removes session time limits.

Can I run open-source LLMs like Llama or Mistral on both platforms?

Absolutely.

Both RunPod and Paperspace support running open-source LLMs.

RunPod makes it easy with ready-made templates and cheap A100 or RTX 4090 access.

Paperspace’s Gradient Notebooks offer a comfortable Jupyter-based environment for experimenting with LLMs interactively.

How does RunPod compare to Vast.ai?

RunPod and Vast.ai are both decentralized GPU marketplaces, but RunPod has a more polished interface, stronger reliability guarantees, and better serverless infrastructure.

For a full head-to-head breakdown, read our Vast.ai vs RunPod 2026 comparison.

Which platform is better for AI startups?

Early-stage startups should default to RunPod for cost efficiency.

Growth-stage teams building production ML systems may prefer Paperspace’s Gradient for pipeline and deployment tooling, or consider combining both.

For a full provider comparison, see our Best GPU Cloud Providers for AI guide.

Does RunPod have H100 GPUs?

Yes.

RunPod offers H100 SXM and H100 PCIe nodes through its Secure Cloud in select data centers. Availability varies by region.

H100 access on Paperspace is more limited.

If H100 is critical for your work, RunPod is the safer bet.

Published by Savezly.com — your 2026 authority for AI infrastructure, GPU cloud insights, and performance optimization.

Pricing and availability data is approximate and subject to change.

Always verify current rates on each platform before committing to a workload.